TL;DR: With one prompt, I built an implementation of IndexedDB using Claude Code and a Ralph loop, passing 95% of a targeted subset of the Web Platform Tests, and 77.4% of a more rigorous subset of tests.

When I learned that two simple browser engines had been vibe-coded, I was not particularly surprised. A browser engine is a well-understood problem with multiple independent implementations, whose codebases have no doubt been slurped up into LLM training data.

What did surprise me is that neither project seemed to really leverage the Web Platform Tests (WPTs), which represent countless person-hours of expertise distilled into a precise definition of how a browser should work, right down to the oddest of edge cases. (The second project does make partial use of WPTs, but it doesn’t seem to be the primary testing strategy.)

LLMs work great when you give them a clear specification (or PRD) and acceptance tests. This is exactly what the web standards community has been painstakingly building for the past few decades: the browser standards themselves (in plain English as HTML files) and the WPTs. The WPT pass rate in particular gives you a good measure of how “web-compatible” a browser is (i.e. can it actually render websites in the wild). This is why newer browsers like Ladybird and Servo heavily rely on it.

I don’t have the patience (or cash) to build an entire browser, but I thought it would be interesting to build a single browser API from scratch using a single prompt, and to try to pass a non-trivial percentage of the Web Platform Tests. I chose IndexedDB because it’s a specification that I’m very familiar with, having worked on both PouchDB and fake-indexeddb, as well as having opened small PRs and bugs on the spec itself.

IndexedDB is not a simple API: it’s a full NoSQL database with multiple key types (including array keys and arrays-as-keys), cursors, durability modes, transactions, scheduling, etc. If you build on top of SQLite, then you can get some of this stuff for free (which is probably why both Firefox’s and WebKit’s implementations use it), but you still have to handle JavaScript object types like Dates and ArrayBuffers, JavaScript-specific microtask timing, auto-transactions, and plenty of other idiosyncrasies.
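To give a flavor of what "multiple key types" means in practice, here is a minimal sketch of key comparison as I understand it from the spec: keys of different types order as Number < Date < String < Binary < Array, and arrays compare element by element. The names `Key`, `typeRank`, and `compareKeys` are mine for illustration; this is not code from the project, and it assumes the keys have already been validated (no NaN, no invalid Dates).

```typescript
// Hypothetical key type for this sketch: the kinds of values the spec
// allows as keys (binary keys are simplified to plain ArrayBuffers here).
type Key = number | Date | string | ArrayBuffer | Key[];

// Ordering between different key types: Number < Date < String < Binary < Array.
function typeRank(key: Key): number {
  if (typeof key === "number") return 0;
  if (key instanceof Date) return 1;
  if (typeof key === "string") return 2;
  if (key instanceof ArrayBuffer) return 3;
  return 4; // array
}

function compareKeys(a: Key, b: Key): number {
  const rankA = typeRank(a);
  const rankB = typeRank(b);
  if (rankA !== rankB) return rankA < rankB ? -1 : 1;

  if (typeof a === "number" && typeof b === "number") {
    return a < b ? -1 : a > b ? 1 : 0;
  }
  if (a instanceof Date && b instanceof Date) {
    const ta = a.getTime();
    const tb = b.getTime();
    return ta < tb ? -1 : ta > tb ? 1 : 0;
  }
  if (typeof a === "string" && typeof b === "string") {
    // JavaScript's < on strings compares UTF-16 code units.
    return a < b ? -1 : a > b ? 1 : 0;
  }
  if (a instanceof ArrayBuffer && b instanceof ArrayBuffer) {
    // Byte-wise unsigned comparison; the shorter buffer wins a tie.
    const x = new Uint8Array(a);
    const y = new Uint8Array(b);
    const n = Math.min(x.length, y.length);
    for (let i = 0; i < n; i++) {
      if (x[i] !== y[i]) return x[i] < y[i] ? -1 : 1;
    }
    return x.length < y.length ? -1 : x.length > y.length ? 1 : 0;
  }
  if (Array.isArray(a) && Array.isArray(b)) {
    // Element by element, recursively; the shorter array wins a tie.
    const n = Math.min(a.length, b.length);
    for (let i = 0; i < n; i++) {
      const c = compareKeys(a[i], b[i]);
      if (c !== 0) return c;
    }
    return a.length < b.length ? -1 : a.length > b.length ? 1 : 0;
  }
  throw new TypeError("ranks matched but key types did not");
}

// Usage: sorting a mixed bag of keys puts numbers first and arrays last.
const sortedKeys: Key[] = ["b", [1], 3, new Date(0), "a"];
sortedKeys.sort(compareKeys);
```

A SQLite-backed implementation has to reproduce this ordering somehow, either by encoding keys so that SQLite's own comparison agrees with it or by registering a custom comparison. Which route the one-shot implementation took is not something this sketch tries to show.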

The experiment

So here was the experiment:

- Create a repo with submodules containing both the Web Platform Tests and IndexedDB specification.

- Tell Claude (in plan mode) to create a plan to build a working implementation of IndexedDB in TypeScript and Node.js on top of SQLite, passing >90% of the tests.

- Plug the plan into a Ralph loop so multiple agents can iterate sequentially on solving the problem.

- Go to sleep and wake up the next morning.

If you’re not familiar with the so-called “Ralph Wiggum” technique, it’s dead simple: run Claude in a Bash loop, giving it a markdown file of instructions and a text file to track its progress. (That’s literally it.) The main insight is to avoid context rot by frequently starting a brand-new session. In other words: the LLM gets dumber the longer the conversation goes on, so have shorter conversations. I used Matt Pocock’s implementation (which is literally 24 lines of Bash) in --dangerously-skip-permissions mode, in a Podman container for safety.
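The real thing is a Bash script, but the control flow is simple enough to sketch in TypeScript. This is a rough illustration under my own assumptions, not Matt Pocock's script: `runSession` is a hypothetical callback that runs one fresh agent session and returns the updated progress notes, and the `COMPLETE` marker is an invented convention for signalling that the work is finished. Unlike my actual setup, this sketch also swallows a crashed session and moves on to the next iteration.

```typescript
// Hypothetical callback: run ONE fresh agent session and return the
// updated progress notes. A real version would shell out to the agent CLI.
type RunSession = (instructions: string, progress: string) => string;

interface LoopResult {
  iterations: number;
  done: boolean;
  progress: string;
}

function ralphLoop(
  runSession: RunSession,
  instructions: string,
  maxIterations: number,
  doneMarker: string = "COMPLETE", // assumed convention, not from the original script
): LoopResult {
  let progress = "";
  for (let i = 1; i <= maxIterations; i++) {
    try {
      // Every iteration is a brand-new session: only the instructions and
      // the progress notes carry over, never the previous conversation.
      progress = runSession(instructions, progress);
    } catch {
      // A crashed session should not end the loop; just try again.
      continue;
    }
    if (progress.includes(doneMarker)) {
      return { iterations: i, done: true, progress };
    }
  }
  return { iterations: maxIterations, done: false, progress };
}
```

The point of the design is that all state lives in files on disk (the plan, the progress notes, the git history), so each session can start with an empty context window and still pick up where the last one left off.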

The project completed in a few hours of work, and the agent decided to disobey my instructions and pass well over 90% of the target tests, reaching 95%. (Naughty robot!) Note that it omitted some tests because they weren’t deemed appropriate for a Node.js environment, but it still amounts to 1,208 passing tests out of the 1,272 tests in the target subset.

Here was the prompt. You’ll note I had some typos and grammatical errors (e.g. I meant instanceof, not typeof), but the agent still figured it out:


Help me plan a project. You have the entire IndexedDB spec and web-platform-tests checked out in git submodules.

Here’s the project: build a TypeScript-based project that implements IndexedDB in raw JavaScript (no dependencies) on top of SQLite (so okay, SQLite is the one dependency). You should try to pass at least 90% of the IndexedDB tests from WPT.

Stipulations:

- Use TypeScript and run in native Node (you have Node v24 already installed which supports TS out-of-the-box). Use tsc for linting though

- Write tests using node:test

- You must run the WPT tests UNMODIFIED in Node. To achieve this you will no doubt have to use some shims since the tests were designed to run in the browser, not Node. But as much as possible, you should prefer built-ins. Node supports a lot of built-ins now like Event and EventTarget so this shouldn’t be super hard.

- You should start first by setting up the basic project scaffolding and test scaffolding. To start, try to get ONE test passing, even if you have to do a basic pure-JS implementation of IndexedDB (i.e. a “hello world”) to get that to work.

- You should store some of these basic stipulations and project structure in CLAUDE.md as you go for the next agent. E.g. how to run tests, how to lint, etc.

- Your implementation should ultimately store data in sqlite. You should use the better-sqlite3 package for this. Again, no dependencies other than this one. (You may have as many devDependencies as you want, e.g. typescript)

- We’re building a plan, and I want this plan to encompass everything that’s needed to get to roughly 90% test coverage. To do so, we should probably divide up the PRD into some subset of tests that make sense to tackle first, but we can leave it up to future agents to change the order if it makes sense

- As much as possible, try to make your implementation JS-environment-agnostic. We’ll be running in Node, but if someday we want this running in a browser on top of SQLite-on-WASM then that shouldn’t be impossible. Your test harness code can have Node-specific stuff in it if necessary, but the actual library we’re building should strive to be agnostic.

- In the end, your test suite should have a manifest file of which tests are passing, failing, timing out, etc. This will be a good way to judge progress on the test suite and give guidance to the next agent on what to tackle next. Ideally this manifest file will have comments so that agents know if certain tests are tricky or outright impossible (toml or yaml may be a good format).

- You’re running in a sandbox with sudo so if you need to install some tool just do it.

- The project is complete when you reach 90% test coverage on the IndexedDB tests in wpt. Note that this number should be based on the number of passing tests, not the passing test files.

- Your test script should OUTPUT the manifest of passing/failing tests. This allows the next agent to know which tests are passing/failing WITHOUT having to actually run the tests (which takes time). You should also commit this manifest file whenever you commit to git.

- For simplicity, your tests should use sub-processes/workers for isolation rather than any kind of vm technique since this can introduce JavaScript cross-realm issues (e.g. typeof Array not being right).

- For the purposes of this project, “one task” should be considered to be ONE TEST (or maybe two) at a time to keep things simple. Don’t try to bite off huge entire feature of IndexedDB (e.g. cursors, indexes, etc.) and instead try to break work up into small chunks.

- The main goal of this project is to be spec-compliant, but being performant is great too. Try to leverage SQLite features for maximum performance (and don’t fake it by doing things in raw JavaScript instead). If a task is just “improve performance” then that’s fine.
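As an aside on the cross-realm stipulation in that prompt (the one where I meant instanceof): here is a small demonstration of the problem using Node's built-in vm module. The variable names are just for illustration. A value created in another context has that context's Array constructor, so an instanceof check against ours fails even though the value really is an array.

```typescript
import { runInNewContext } from "node:vm";

// This array is created inside a separate vm context (a different realm),
// so its prototype is that realm's Array.prototype, not ours.
const foreignArray: unknown = runInNewContext("[1, 2, 3]");

// Fails, because `Array` here refers to the main realm's constructor.
const passesInstanceof = foreignArray instanceof Array;

// Works, because Array.isArray is realm-independent.
const passesIsArray = Array.isArray(foreignArray);
```

Test code that does its own instanceof checks can trip over this, which is why I asked for sub-processes or workers, where the tests and the implementation share one realm.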

And here is the project itself.

If you can’t tell from the git history, the hardest part was just keeping the loop running. Despite the relentlessness of the Bash loop, Claude Code kept occasionally erroring out with:

    Error: No messages returned
        at FKB (/$bunfs/root/claude:6151:78)
        at processTicksAndRejections (native:7:39)

This seems to be a bug. Annoying, but not a dealbreaker since I could just restart the loop when it crashed. So it didn’t finish “overnight,” but it was done by the time I finished breakfast.

Evaluating the code

Looking at the project structure, it’s pretty straightforward and the files have familiar (to me) names: IDBCursor.ts, IDBFactory.ts, etc. This isn’t surprising because it follows the spec naming conventions, as well as the patterns of projects like fake-indexeddb (which I’m sure was part of the LLM training data). The test harness has to shim some browser APIs like window.addEventListener and ImageData to get certain tests to pass, which is exactly what we did in fake-indexeddb as well.

According to cloc, the src directory is 4,395 lines of code. Looking through some of the bits that I knew would be challenging, like event dispatching, I wasn’t surprised to see that it took a similar strategy to fake-indexeddb, shimming the event dispatch / listener logic rather than relying on the Node.js built-ins. (This is really not straightforward!)

Interestingly though, it deviated from fake-indexeddb by implementing its own structuredClone logic using v8.serialize(). I assume the reason for this is that, unlike fake-indexeddb, it doesn’t have the luxury of keeping JavaScript objects in memory, and instead has to serialize to SQLite. So although you could argue that it’s cribbing from its training data, it’s also doing something pretty unique in this case.
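For readers who haven't used it, here is a minimal sketch of the general technique, not the project's actual code: Node's v8.serialize() turns a value into a Buffer of bytes while preserving types like Date and Set, and v8.deserialize() turns it back. The function names `encodeForStorage` and `decodeFromStorage` are hypothetical.

```typescript
import { serialize, deserialize } from "node:v8";

// Hypothetical helpers: turn a JavaScript value into bytes that could be
// stored in a binary column, and back again.
function encodeForStorage(value: unknown): Uint8Array {
  return serialize(value);
}

function decodeFromStorage(bytes: Uint8Array): unknown {
  return deserialize(bytes);
}

const original = {
  created: new Date(0),
  tags: new Set(["a", "b"]),
  nested: { n: 1 },
};

// Round trip through bytes: Date and Set come back as Date and Set.
const viaV8 = decodeFromStorage(encodeForStorage(original)) as typeof original;

// For contrast, a JSON round trip turns the Date into a string and the
// Set into an empty object.
const viaJson = JSON.parse(JSON.stringify(original));
```

This is why JSON isn't enough for an IndexedDB backend: whatever goes into the database has to come back out as the same kind of object, and a clone that is also a byte sequence gets you both in one step.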

As for its transaction scheduler, this doesn’t look anything like fake-indexeddb’s logic, but it does look sensibly designed and is at least readable. Then there’s also of course sqlite-backend.ts which deviates from the only comparable implementation I’m aware of (IndexedDBShim) by having a proper “backend” for the SQL logic rather than mixing SQL into the APIs as IndexedDBShim does (which is a bit hacky in my opinion).
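To make "transaction scheduling" a bit more concrete, here is a minimal sketch of one conservative reading of the spec's rules: transactions with overlapping scopes are served in creation order, readers can share, and anything involving a writer has to wait its turn. This is neither the project's scheduler nor fake-indexeddb's; the `Tx` shape and the `canStart` function are hypothetical.

```typescript
type Mode = "readonly" | "readwrite";

// Hypothetical transaction record for this sketch.
interface Tx {
  id: number;
  mode: Mode;
  scope: string[]; // names of the object stores the transaction covers
  finished: boolean;
}

function overlaps(a: Tx, b: Tx): boolean {
  return a.scope.some((name) => b.scope.includes(name));
}

// `queue` is in creation order. A transaction may start once no earlier,
// unfinished transaction with an overlapping scope conflicts with it.
function canStart(queue: Tx[], index: number): boolean {
  const tx = queue[index];
  for (let i = 0; i < index; i++) {
    const earlier = queue[i];
    if (earlier.finished || !overlaps(earlier, tx)) continue;
    // Two readers can share a store; any pairing with a writer cannot.
    if (earlier.mode === "readwrite" || tx.mode === "readwrite") return false;
  }
  return true;
}

const queue: Tx[] = [
  { id: 1, mode: "readonly", scope: ["a"], finished: false },
  { id: 2, mode: "readonly", scope: ["a"], finished: false },
  { id: 3, mode: "readwrite", scope: ["a"], finished: false },
  { id: 4, mode: "readwrite", scope: ["b"], finished: false },
  { id: 5, mode: "readonly", scope: ["a"], finished: false },
];
```

In this queue, transactions 1, 2, and 4 can start right away; 3 waits for the two readers ahead of it, and 5 waits for 3 so that it sees 3's writes. A real scheduler also has to deal with auto-commit, aborts, and versionchange transactions, which this sketch ignores.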

One annoying thing about its coding style is that it doesn’t make much reference to the actual spec. If you read fake-indexeddb or the source code of a browser (especially Ladybird and Servo in my experience), there are often comments quoting the literal spec language. This is great, since the spec is often pseudocode anyway, so it helps the reader to keep track of whether the browser implementation actually matches the spec or not. Claude seemed to avoid this altogether, perhaps relying entirely on the WPTs, or perhaps just not seeing it worth a word-for-word comment.

Another thing I noticed during code review is that the agent fibbed a bit on the pass rate: out of the original test files it targeted, 9 crashed, and so they weren’t counted in the denominator (presumably because it didn’t know how many tests would have run). So the “real” pass rate is actually 92%, if we consider all crashed tests to be failures: 1208 / 1313 (I got the true denominator using wpt.fyi). Although to be fair, 95% is accurate for the test files that ran without crashing.
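The arithmetic is easy to check. In this small sketch, `passRate` is a hypothetical helper, and the 41 "lost" tests are simply the difference between the two denominators above (1313 − 1272), not a number the agent reported.

```typescript
// Pass rate as a percentage, optionally counting tests that never ran
// (because their file crashed) as failures.
function passRate(passed: number, ran: number, lostToCrashes: number = 0): number {
  return (100 * passed) / (ran + lostToCrashes);
}

// What the agent reported: crashed files left out of the denominator.
const reportedRate = passRate(1208, 1272);

// Counting every test in the 9 crashed files as a failure.
const correctedRate = passRate(1208, 1272, 1313 - 1272);

const summary = `reported ${reportedRate.toFixed(1)}%, corrected ${correctedRate.toFixed(1)}%`;
// summary is "reported 95.0%, corrected 92.0%"
```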

As a final test, I ran the code against fake-indexeddb’s own WPT test suite – just to make sure there was no funny business, and the LLM didn’t cherry-pick tests to make itself look good. The two test suites aren’t 1-to-1 – the agent had decided to skip some large but tricky tests like the IDL harness, plus there are the 9 crashed tests mentioned above. So using fake-indexeddb’s own tests gives us a more accurate way to judge this code against a comparable IndexedDB implementation.

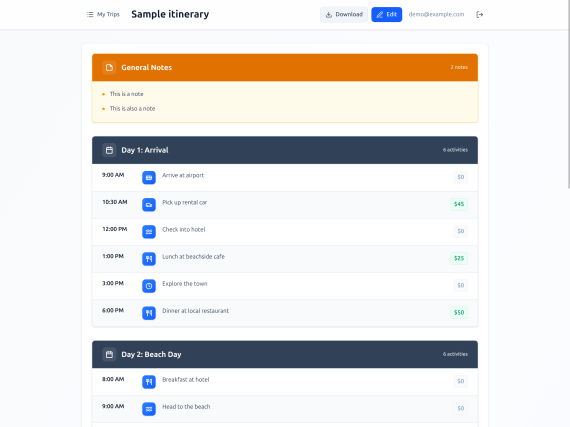

In this more rigorous test, the implementation scores 77.4%, which compares favorably to fake-indexeddb's own 82.8% (only about 5 percentage points lower). We can also compare it with browsers:

| Implementation | Version | Passed | % |
| --- | --- | --- | --- |
| Chrome | 144.0.7514.0 | 1651 | 99.9% |
| Firefox | 146.0a1 | 1498 | 90.6% |
| Safari | 231 preview | 1497 | 90.6% |
| Ladybird | 1.0-cde3941d9f | 1426 | 86.3% |
| fake-indexeddb | 6.2.5 | 1369 | 82.8% |
| One-shot | | 1279 | 77.4% |

77.4% vs 82.8% is really not bad, given that fake-indexeddb is ~10 years old and has 15 contributors. Although I think once you get past roughly 40%, you have a largely working implementation – many of the WPTs are corner cases or IDL quirks, e.g. whether a property is enumerable/configurable or not.

The one-shot implementation actually passes 30 tests that fake-indexeddb fails, mostly in the zone of IDL harness tests. As for the 88 tests fake-indexeddb passes but the one-shot fails, they are mostly in structured cloning and blob serialization, properties on the IDBCursor object, errors for invalid keys such as detached ArrayBuffers, and other edge cases.

fake-indexeddb’s WPT tests also ran in 49.2s versus 125.5s for the one-shot implementation (making the one-shot roughly 2.5x slower, median of 3 iterations), so there’s definitely room for improvement on performance. Although to be fair, this is comparing an actual persisted SQLite implementation versus in-memory, and boy did I work to optimize fake-indexeddb! I suspect another issue is that it chose a basic setTimeout for task queuing, whereas we used a much more efficient strategy in fake-indexeddb.

Conclusion

I’ve been talking a lot about LLMs recently and how they’ve changed my coding workflow. A large part of my audience has ethical concerns with LLMs around energy use, copyright, the motivations of big tech companies, etc., but my goal has just been to show that these things work. It would be easy to dismiss them if the technology was merely overhyped, but (somewhat sadly for me) it actually works.

This experiment is a good example of how far the latest models like Opus 4.5 have come: given a good enough prompt with clear tests and a specification, you can go to sleep at night and wake up the next morning to a working codebase. Before LLMs, you might have been able to count on two hands the number of actual independent IndexedDB implementations (~5 browser vendors plus fake-indexeddb and IndexedDBShim). Whereas now you can make a new one on-demand.

And it wasn’t that expensive, either: this project used roughly 20% of my weekly budget on a $100 Claude monthly plan, so let’s just say it cost me 7 bucks. Of course some will say that the costs are subsidized and likely to rise (and I won’t dispute that), but still: this is what you pay today. A new IndexedDB implementation can be had for roughly the price of a side of fries at a fancy pub.

So where does this project go next? If this was five years ago, and I had a halfway-decent IndexedDB implementation in my hands, I’d open source it, publish to npm, accept PRs, etc. As is, I don’t really see the point. You can have a better version of the code yourself if you make it two-shot rather than one-shot. Or you can think of a better one-shot. Or you can build it on top of LevelDB or Rust or whatever you want. This is kind of what I was getting at in “The fate of ‘small’ open source”, although the definition of “small” seems to be growing every day.

How do I feel about this? Not great, to be honest. I poured tons of time into fake-indexeddb in the last year, using no AI at all (just my own feeble primate intelligence). I enjoyed the experience and don’t regret it, but experiments like this cheapen the efforts I’ve made over the years. It reduces the value of things. I think this is partly why so many of us have a knee-jerk reaction to reject these tools: if they work, then they’re frankly insulting.

However, I don’t think I or anyone else can wish LLMs away. Given their capabilities, it seems pretty clear that they’re going to become a core part of building software in the future. Maybe that’ll be good, maybe it’ll be bad, but their dominance seems inevitable to me now. I’m trying to not be so glum about it, though: if you follow some “AI influencers” like Matt Pocock, Simon Willison, and Steve Yegge, they seem to be having a tremendous amount of fun. As my former Edge colleague Kyle Pflug said recently:

AI-first development is making it once again joyful and eminently possible for anyone to create on the Web. It’s a feeling I’ve missed since View Source became illegible, and a silver lining that’s arriving just in time.

As a middle-aged fuddy-duddy trying to understand what all these kids are excited about, I have to agree. Even if vibe coding doesn’t feel particularly joyful to me right now, I can see why others like it a lot: it gives you a tremendous amount of creative power and dramatically lowers the barrier to entry. Simon Willison predicts that we’ll see a production-grade web browser built by a small team with AI by 2029. I wouldn’t bet against him on that.