I love the js-framework-benchmark. It’s a true open-source success story – a shared benchmark, with contributions from various JavaScript framework authors, widely cited, and used to push the entire JavaScript ecosystem forward. It’s a rare marvel.

That said, the benchmark is so good that it’s sometimes taken as the One True Measure of a web framework’s performance (or maybe even worth!). But like any metric, it has its flaws and limitations. Many of these limitations are well-known among framework authors like myself, but aren’t widely known outside a small group of experts.

In this post, I’d like to celebrate the js-framework-benchmark for its considerable achievements while also highlighting some of its quirks and limitations.

The greatness

First off, I want to acknowledge the monumental work that Stefan Krause has put into the js-framework-benchmark. It’s practically a one-man show – if you look into the commit history, it’s clear that Stefan has shouldered the main burden of maintaining the benchmark over time.

This is not a simple feat! A recent subtle issue with Chrome 124 shows just how much work goes into keeping even a simple benchmark humming across major browser releases.

So I don’t want anything in this post to come across as an attack on Stefan or the js-framework-benchmark. I am the king of burning out on open-source projects (PouchDB, Pinafore), so I have no leg to stand on to criticize an open-source maintainer with such tireless dedication. I can only sit in awe of Stefan’s accomplishment. I’m humbled and grateful.

If anything, this post should underscore how utterly the benchmark has succeeded under Stefan’s stewardship. Despite its flaws (which any benchmark would have), the js-framework-benchmark has become almost synonymous with “JavaScript framework performance.” To me, this is almost entirely due to Stefan’s diligence and attention to detail. Under different leadership, the benchmark might have been forgotten by now.

So within that context, I’d like to talk about the things the benchmark doesn’t measure, as well as the things it measures slightly differently from how folks might expect.

What does the benchmark do exactly?

First off, we have to understand what the js-framework-benchmark actually tests.

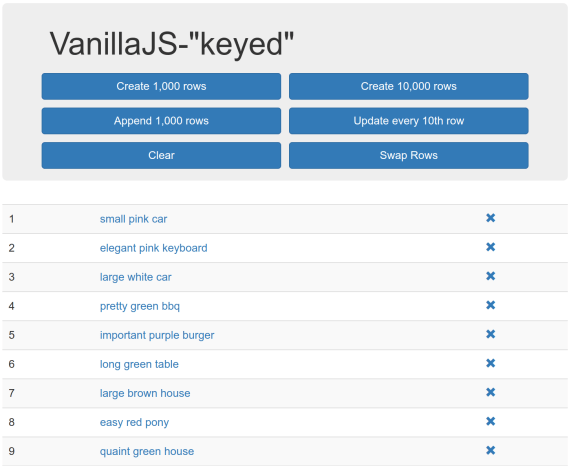

Screenshot of the vanillajs (i.e. baseline) “framework” in the js-framework-benchmark

To oversimplify, the core benchmark is:

- Render a <table> with up to 10k rows

- Add rows, mutate a row, remove rows, etc.

This is basically it. Frameworks are judged on how fast they can render 10k table rows, mutate a single row, swap some rows around, etc.
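To make those operations concrete, here is a rough data-level sketch of what gets exercised. This is not the benchmark's actual code: the function names and the row shape (`id`, `label`) are my own assumptions for illustration, and in the real thing a framework turns each of these state changes into DOM updates on the table.

```javascript
// Hypothetical data-level sketch. The real benchmark hands this kind of
// state to a framework, which renders it into a <table>.
let nextId = 1;

// Build `count` row records. The labels are placeholders.
function buildRows(count) {
  const rows = [];
  for (let i = 0; i < count; i++) {
    rows.push({ id: nextId++, label: `row ${i}` });
  }
  return rows;
}

// Mutate a single row's label, returning a new array.
function updateRow(rows, id, label) {
  return rows.map((row) => (row.id === id ? { ...row, label } : row));
}

// Swap two rows by index, returning a new array.
function swapRows(rows, a, b) {
  const copy = rows.slice();
  [copy[a], copy[b]] = [copy[b], copy[a]];
  return copy;
}

// Remove one row by id, returning a new array.
function removeRow(rows, id) {
  return rows.filter((row) => row.id !== id);
}
```

What the benchmark times is how quickly a framework reflects each of these changes in the rendered table, not the array manipulation itself.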

If this sounds like a very specific scenario, well… it kind of is. And this is where the main limitations of the benchmark come in. Let’s cover each one separately.

SSR and hydration

Most clearly, the js-framework-benchmark does not measure server-side rendering (SSR) or hydration. It is purely focused on client-side rendering (CSR).

This is fine, by the way! Plenty of web apps are pure-CSR Single-Page Apps (SPAs). And there are other benchmarks that do cover SSR, such as Marko’s isomorphic UI benchmarks.

This is just to say that, for frameworks that almost exclusively focus on the performance benefits they bring to SSR or hydration (such as Qwik or Astro), the js-framework-benchmark is not really going to tell you how they stack up to other frameworks. The main value proposition is just not represented here.

One big component

The js-framework-benchmark typically renders one big component. There are some exceptions, such as the vanillajs-wc “framework” using multiple web components. But in general, most of the frameworks you’ve heard of render one big component containing the entire table and all its rows and cells.

There is nothing inherently wrong with this. However, it means that any per-component overhead (such as the overhead inherent to web components, or the overhead of the framework’s component abstraction) is not captured in the benchmark. And of course, any future optimizations that frameworks might do to reduce per-component overhead will never win points on the js-framework-benchmark.

Again, this is fine. Sometimes the ideal implementation is “one big component.” However, it’s not very common, so this is something to be aware of when reading the benchmark results.

Optimized by framework authors

Framework authors are a competitive bunch. Even framework users are defensive about their chosen framework. So it’s no surprise that what you’re seeing in the js-framework-benchmark has been heavily optimized to put each framework in the best possible light.

Sometimes this is reasonable – after all, the benchmark should try to represent what a competent component author would write. In other cases… it’s more of a gray zone.

I don’t want to demonize any particular framework in this post. So I’m going to call out a few cases I’ve seen of this, including one from the framework I work on (LWC).

- Svelte’s HTML was originally written in an awkward style designed to eliminate the overhead of inserting whitespace text nodes. To Svelte’s credit, the new Svelte v5 code does not have this issue.

- Vue introduced the v-memo directive (at least in part) to improve its score on the js-framework-benchmark by minimizing the overhead of virtual DOM diffing in the “select row” test (i.e. updating one row out of 1k). However, this could be construed as unfair, since v-memo is an advanced directive that only performance-minded component authors are likely to use, whereas other frameworks can be just as competitive with only idiomatic component authoring patterns.

- Event delegation is a whole can of worms. Some frameworks (such as Solid and Svelte) do automatic event delegation, which boosts performance without requiring developer intervention. Other frameworks in the benchmark, such as Million and Lit, use manual delegation, which again is a bit unfair because it’s not something a component author will necessarily think to do. (The LWC component uses mild manual event delegation, by placing one listener on each row instead of two.) This can make a big difference in the benchmark, especially since the vanillajs “framework” (i.e. the baseline) uses event delegation, so you kind of have to do it to be truly competitive, unless you want to be penalized for adding 20k click listeners instead of one.

Again, none of this is necessarily good or bad. Event delegation is a worthy technique, v-memo is a great optimization for those who know to use it, and as a Svelte user I’ve even worked around the whitespace issue myself. Some of these points (such as event delegation) are even noted in the benchmark results. But I’d wager that most folks reading the benchmark are not aware of these subtleties.
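For readers who haven't run into event delegation before, here is a minimal sketch of the idea: one handler on a shared ancestor figures out which row was clicked, instead of every row getting its own listeners. The names `findRowId`, `makeDelegatedHandler`, and the `rowId` property are hypothetical and not taken from any framework in the benchmark. I've written the ancestor walk by hand so the logic is visible; in a browser, `event.target.closest("tr")` would do roughly the same job.

```javascript
// Walk up from the clicked node to the nearest ancestor carrying a row id.
// `rowId` is a hypothetical property used for illustration only.
function findRowId(node) {
  while (node) {
    if (node.rowId !== undefined) return node.rowId;
    node = node.parentNode;
  }
  return undefined;
}

// Build the single delegated handler. In a browser it would be attached
// once, for example:
//   table.addEventListener("click", makeDelegatedHandler(onSelect));
function makeDelegatedHandler(onSelect) {
  return function handleClick(event) {
    const rowId = findRowId(event.target);
    // Clicks outside any row are ignored.
    if (rowId !== undefined) onSelect(rowId);
  };
}
```

Frameworks with automatic delegation do something along these lines on the component author's behalf, which is why it costs the author nothing there and takes deliberate effort elsewhere.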

10k rows is a lot

The benchmark renders 1k-10k table rows, with 7 elements inside each one. Then it tests mutating, removing, or swapping those rows.

Frameworks that do well on this scenario are (frankly) amazing. However, that doesn’t change the fact that this is a very weird scenario. If you are rendering 8k-80k DOM elements, then you should probably start thinking about pagination or virtualization (or at least content-visibility). Putting that many elements in the same component is also not something you see in most web apps.

Because this is such an atypical scenario, it also exaggerates the benefit of certain optimizations, such as the aforementioned event delegation. If you are attaching one event listener instead of 20k, then yes, you are going to be measurably faster. But should you really ever put yourself in a situation where you’re creating 20k event listeners on 80k DOM elements in the first place?
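The numbers above fall out of some simple arithmetic, sketched below. The assumptions are mine: I'm counting each row as one row element plus the 7 elements inside it, and two click listeners per row in the non-delegated case. The function names are hypothetical.

```javascript
// Assumption: each row is one row element plus the 7 elements inside it.
const ELEMENTS_PER_ROW = 1 + 7;

// Total DOM elements rendered for a given number of rows.
function countElements(rowCount) {
  return rowCount * ELEMENTS_PER_ROW;
}

// Total listeners attached: one shared listener when delegating,
// otherwise `listenersPerRow` for every row.
function countListeners(rowCount, listenersPerRow, delegated) {
  return delegated ? 1 : rowCount * listenersPerRow;
}

console.log(countElements(1000)); // 8000
console.log(countElements(10000)); // 80000
console.log(countListeners(10000, 2, false)); // 20000
console.log(countListeners(10000, 2, true)); // 1
```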

Chrome-only

One of my biggest pet peeves is when web developers only pay attention to Chrome while ignoring other browsers. Especially in performance discussions, statements like “Such-and-such DOM API is fast” or “The browser is slow at X,” where Chrome is merely implied, really irk me. This is something I railed against in my tenure on the Microsoft Edge team.

Focusing on one browser does kind of make sense in this case, though, since the js-framework-benchmark relies on some advanced Chromium APIs to run the tests. It also makes the results easier to digest, since there’s only one browser in play.

However, Chrome is not the only browser that exists (a fact that may surprise some web developers). So it’s good to be aware that this benchmark has nothing to say about Firefox or Safari performance.

Only measuring re-renders

As mentioned above, the js-framework-benchmark measures client-side rendering. Bundle size and memory usage are tracked as secondary measures, but they are not the main thing being measured, and I rarely see them mentioned. For most people, the runtime metrics are the benchmark.

Additionally, the bootstrap cost of a framework – i.e. the initial cost to execute the framework code itself – is not measured. Combine this with the lack of SSR/hydration coverage, and the js-framework-benchmark probably cannot tell you if a framework will tank your Largest Contentful Paint (LCP) or Total Blocking Time (TBT) scores, since it does not measure the first page load.

However, this lack of coverage for first-render goes even deeper. To avoid variance, the js-framework-benchmark does 5 “warmup” iterations before most tests. This means that many more first-render costs are not measured:

- Pre-JITed (Just-In-Time compilation) performance

- Initial one-time framework costs

For those unaware, JavaScript engines will JIT any code that they detect as “hot” (i.e. frequently executed). By doing 5 warmup iterations, we effectively skip past the pre-JITed phase and measure the JITed code directly. (This is also called “peak performance.”) However, the JITed performance is not necessarily what your users are experiencing, since every user has to experience the pre-JITed code before they can get to the JIT!
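Here is a rough sketch of what “warm up, then measure” looks like in a timing loop. This is not the benchmark's own harness (which relies on Chromium APIs); `timeRuns` and its options are hypothetical names, and whether an engine has actually JITed the code after 5 calls depends on the engine.

```javascript
// Run `fn` repeatedly and keep the timings of the first `warmup` calls
// separate from the `measured` calls that follow.
function timeRuns(fn, { warmup = 5, measured = 10 } = {}) {
  const cold = []; // early calls: roughly what a first-time user experiences
  const warm = []; // later calls: closer to "peak performance"
  for (let i = 0; i < warmup + measured; i++) {
    const start = performance.now();
    fn();
    const elapsed = performance.now() - start;
    (i < warmup ? cold : warm).push(elapsed);
  }
  return { cold, warm };
}
```

A harness that reports only `warm` is reporting peak performance. The `cold` timings, which every user pays at least once, never make it into the results.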

The second point above is also important. As mentioned in a previous post, lots of next-gen frameworks use a pattern where they set the innerHTML on a <template> once and then use cloneNode(true) after that. If you profile the js-framework-benchmark, you will find that this initial innerHTML (aka “Parse HTML”) cost is never measured, since it’s part of the one-time setup costs that occur during the “warmup” iterations. This gives these frameworks a slight advantage, since setting innerHTML (among other one-time setup costs) can be expensive.
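The parse-once-then-clone pattern can be sketched like this. It is a simplified illustration rather than any framework's real implementation: `createCloner` is a hypothetical name, and the `parse` and `clone` steps are passed in so the sketch isn't tied to a DOM.

```javascript
// Create a factory that parses its HTML once and clones thereafter.
// In a browser, the injected functions might look like:
//   parse: (html) => {
//     const t = document.createElement("template");
//     t.innerHTML = html; // the one-time "Parse HTML" cost
//     return t.content;
//   }
//   clone: (fragment) => fragment.cloneNode(true)
function createCloner(html, parse, clone) {
  let parsed = null; // filled in on first use only
  return function create() {
    if (parsed === null) parsed = parse(html);
    return clone(parsed);
  };
}
```

If the first call to `create()` happens during a warmup iteration, the parse cost lands there, and every measured iteration only pays for the clone.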

Putting all this together, I would say that the js-framework-benchmark is comparable to the old DBMon benchmark – it is measuring client-side-heavy scenarios with frequent re-renders. (Think: a spreadsheet app, data dashboard, etc.) This is definitely not a typical use case, so if you are choosing your framework based on the js-framework-benchmark, you may be sorely disappointed if your most important perf metric is LCP, or if your SPA navigations tend to re-render the page from scratch rather than only mutate small parts of the page.

Conclusion

The js-framework-benchmark is amazing. It’s great that we have it, and I have personally used it to track performance improvements in LWC, and to gauge where we stack up against other frameworks.

However, the benchmark is just what it is: a benchmark. It is not real-world user data, it is not data from your own website or web app, and it does not cover every possible definition of the word “performance.”

Like all microbenchmarks, the js-framework-benchmark is useful for some things and completely irrelevant for others. However, because it is so darn good (rare for a microbenchmark!), it has often been taken as gospel, as the One True Measure of a framework’s speed (or its worth).

However, the fault does not really lie with the js-framework-benchmark. It is on us – the web developer community – to write other benchmarks to cover the scenarios that the js-framework-benchmark does not. It’s also on us framework authors to educate framework consumers (who might not have all this arcane knowledge!) about what a benchmark can tell you and what it cannot tell you.

In the browser world, we have several benchmarks: Speedometer, MotionMark, Kraken, SunSpider, Octane, etc. No one would argue that any of these are the One True Benchmark (although Speedometer comes close) – they all measure different things and are useful in different ways. My wish is that someday we could say the same for JavaScript framework benchmarks.

In the meantime, I will continue using and celebrating the js-framework-benchmark, while also being mindful that it is not the final word on web framework performance.